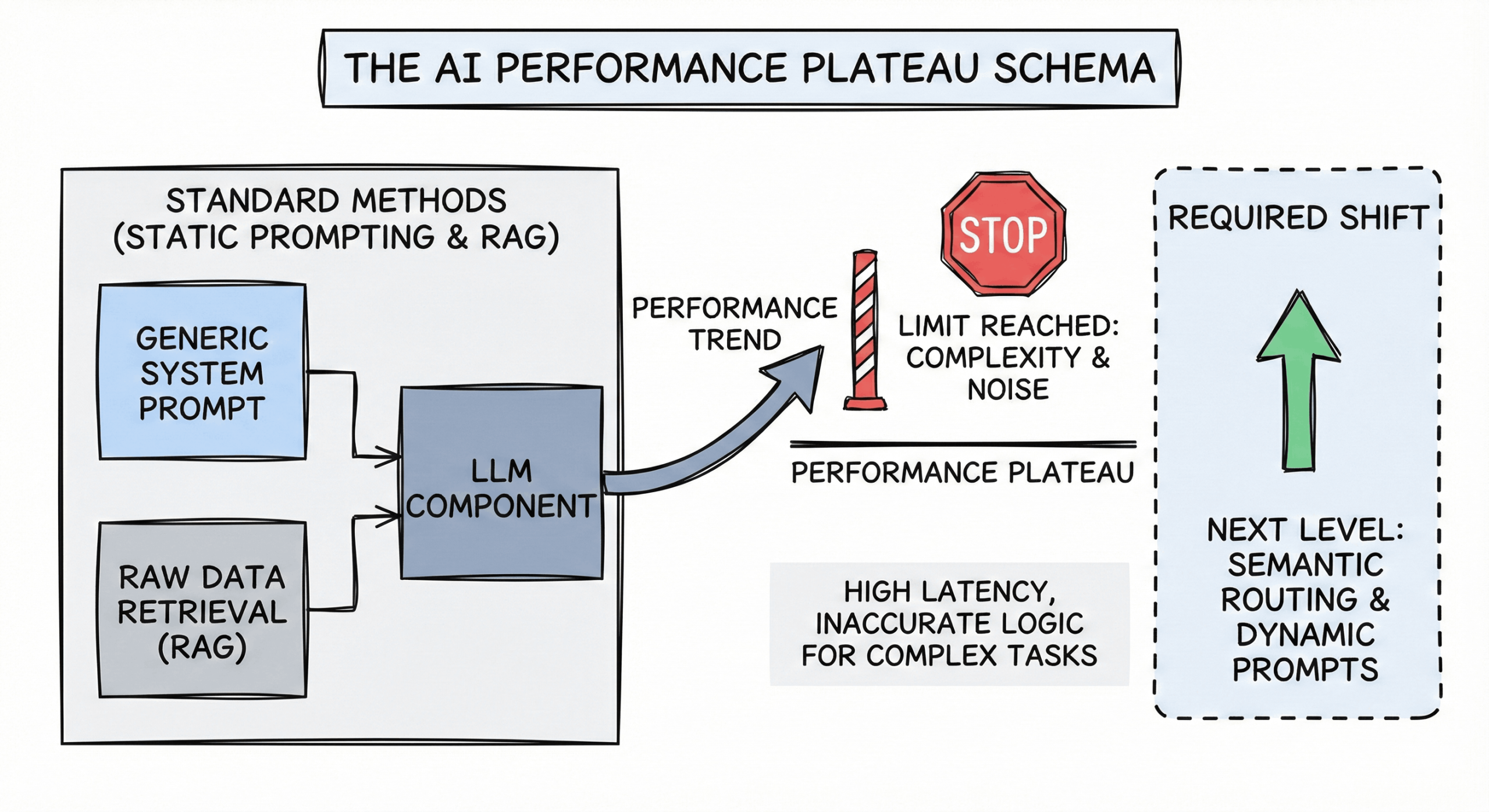

Introduction: The Performance Plateau

In the rush to build AI agents, the industry adopted two standard patterns: Static Prompting (one massive system prompt) and Standard RAG (retrieving raw data chunks). While these methods work for basic tasks, they are hitting a performance wall in complex production environments.

Engineers are finding that “more context” doesn’t always equal “better answers.” In fact, irrelevant context often degrades the model’s reasoning.

This post explores the architectural shift validated by recent research (including arXiv:2512.04106): moving from static data retrieval to Semantic Routing and Dynamic Prompt Selection.

The Bottleneck: Why Static RAG Isn’t Enough

To optimize performance, we first need to distinguish between Knowledge and Reasoning.

- Standard RAG is excellent for Knowledge (e.g., “What is the policy on refunds?”).

- Standard RAG is inefficient for Reasoning (e.g., “Analyze this code for vulnerabilities based on OWASP standards.”).

When you force an LLM to “learn” how to reason by retrieving 10 chunks of raw documentation, you introduce noise. This leads to:

- Higher Latency: Processing thousands of unnecessary tokens.

- Diluted Attention: The model gets distracted by irrelevant facts instead of focusing on the logic.

- Inconsistent Outputs: Without a specific instruction set, the model’s reasoning varies from query to query.

The Solution: Semantic Routing & Dynamic Prompts

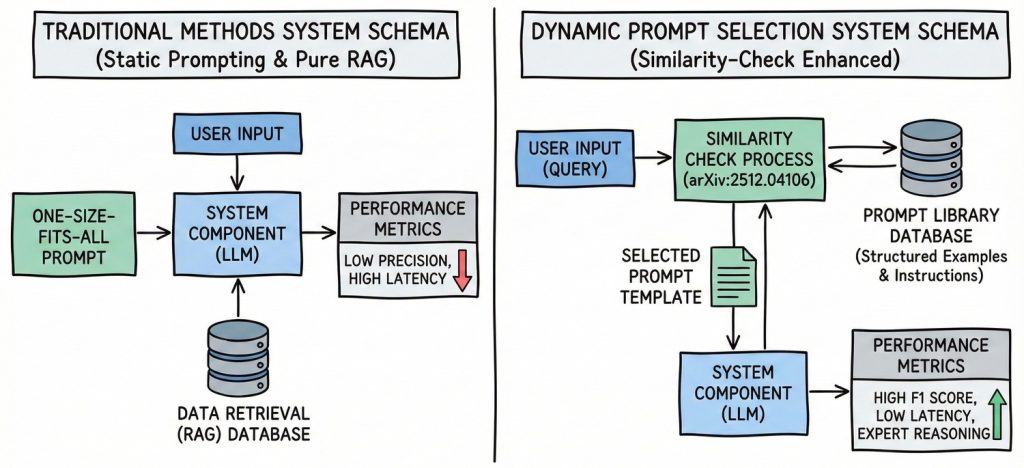

Semantic Routing acts as an intelligent traffic controller. Instead of sending every query to the same generic prompt or the same RAG pipeline, it uses vector similarity to infer the user’s intent, then routes the query to a highly specialized Dynamic Prompt.

How It Works (The Architecture)

- The Prompt Library: You build a curated database of “Golden Prompts”—highly optimized instructions, few-shot examples, and reasoning chains—indexed by intent.

- The Similarity Check: When a user query arrives, a lightweight embedding model embeds it and compares the result against the entries in your library to find the closest intent.

- Context Injection: The system retrieves the exact reasoning template required (e.g., “Python Debugging Template”) rather than a generic “You are a coding assistant” prompt.
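The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the library contents, the `select_prompt` function, and the `min_score` threshold are all hypothetical, and the bag-of-words `embed` function is only a stand-in for a real embedding model so that the example runs with the standard library alone.

```python
import math
import re
from collections import Counter

# Hypothetical prompt library: each intent has sample queries and a template.
PROMPT_LIBRARY = {
    "python_debugging": {
        "examples": [
            "why does my python function raise a TypeError",
            "fix this python traceback",
        ],
        "template": "Python Debugging Template: reproduce the error, "
                    "locate the failing line, explain the cause, propose a fix.",
    },
    "sql_generation": {
        "examples": [
            "write a sql query to join two tables",
            "select rows from a database table",
        ],
        "template": "SQL Generation Template: restate the schema, "
                    "write the query, explain each clause.",
    },
}

# Generic prompt used when no intent matches well enough (assumed behavior).
FALLBACK_PROMPT = "You are a coding assistant."


def embed(text):
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_prompt(query, min_score=0.3):
    """Return (intent, template) for the closest intent, or the fallback."""
    query_vec = embed(query)
    best_intent, best_score = None, 0.0
    for intent, entry in PROMPT_LIBRARY.items():
        # Score an intent by its best-matching example query.
        score = max(cosine(query_vec, embed(ex)) for ex in entry["examples"])
        if score > best_score:
            best_intent, best_score = intent, score
    if best_intent is None or best_score < min_score:
        return None, FALLBACK_PROMPT
    return best_intent, PROMPT_LIBRARY[best_intent]["template"]


if __name__ == "__main__":
    intent, prompt = select_prompt("my python script raises a TypeError")
    print(intent)
    print(prompt)
```

In a real system, `embed` would call an embedding model and the library would likely live in a vector index; the threshold value would need tuning against your own traffic.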

The Gains: What the Data Shows

Shifting to this dynamic architecture yields measurable improvements:

1. F1 Score & Accuracy

Research indicates that for complex logical tasks, retrieving a specific “reasoning pattern” (Few-Shot examples) outperforms retrieving raw data. In vulnerability detection benchmarks, Retrieval-Augmented Prompting has been shown to double performance compared to zero-shot baselines, reaching F1 scores of 74% or higher.

2. Latency & Cost Optimization

By retrieving a precise 500-token instruction template instead of 5,000 tokens of messy RAG documents, you significantly reduce Time to First Token (TTFT). This also lowers API costs by keeping context windows lean.
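As a rough back-of-the-envelope check on the 500 versus 5,000 token figures, the snippet below computes the relative reduction in injected context and the input cost per request. The price constant is a made-up placeholder, not a real provider rate, and the calculation ignores the user query itself, output tokens, and any overhead from the routing step.

```python
TEMPLATE_TOKENS = 500   # instruction template size from the text
RAG_TOKENS = 5_000      # raw RAG context size from the text

# Hypothetical price; substitute your provider's actual input-token rate.
USD_PER_MILLION_INPUT_TOKENS = 1.00


def prompt_cost_usd(tokens, usd_per_million=USD_PER_MILLION_INPUT_TOKENS):
    """Input cost in USD for a given number of context tokens."""
    return tokens / 1_000_000 * usd_per_million


def relative_saving(small, large):
    """Fraction of context tokens avoided by using the smaller context."""
    return 1 - small / large


if __name__ == "__main__":
    saving = relative_saving(TEMPLATE_TOKENS, RAG_TOKENS)
    print(f"Context tokens avoided: {saving:.0%}")
    print(f"Template cost per request: ${prompt_cost_usd(TEMPLATE_TOKENS):.6f}")
    print(f"RAG cost per request:      ${prompt_cost_usd(RAG_TOKENS):.6f}")
```

How much of that token reduction shows up as lower TTFT depends on the model and serving stack, so it is worth measuring rather than assuming a linear relationship.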

3. Separation of Concerns

Dynamic Prompting allows you to treat prompts like software functions. You can update your “SQL Generation” prompt without the risk of breaking your “Creative Writing” prompt.
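One way to picture "prompts as functions" is a small registry where each prompt is an independent, versioned entry. The sketch below is illustrative only: `PromptSpec`, `REGISTRY`, `update_prompt`, and the template texts are hypothetical names and contents, not part of any specific framework.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptSpec:
    """A single versioned prompt, rendered like a function call."""
    name: str
    version: str
    template: Template

    def render(self, **variables):
        # Raises KeyError if a required placeholder is missing.
        return self.template.substitute(**variables)


# Hypothetical registry with two independent prompts.
REGISTRY = {
    "sql_generation": PromptSpec(
        "sql_generation", "1.0", Template("Write a SQL query for: $task")
    ),
    "creative_writing": PromptSpec(
        "creative_writing", "1.0", Template("Write a short story about: $topic")
    ),
}


def update_prompt(registry, name, version, text):
    """Return a new registry with one prompt replaced; the rest are untouched."""
    updated = dict(registry)
    updated[name] = PromptSpec(name, version, Template(text))
    return updated


if __name__ == "__main__":
    new_registry = update_prompt(
        REGISTRY, "sql_generation", "1.1", "Write a PostgreSQL query for: $task"
    )
    print(new_registry["sql_generation"].render(task="monthly revenue"))
    print(new_registry["creative_writing"].render(topic="a lighthouse"))
```

Because each entry is immutable and replaced as a unit, a change to one prompt can be reviewed, tested, and rolled back without touching the others.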

Conclusion: When to Use Which?

This isn’t about abandoning RAG; it’s about using the right tool for the job.

- Use Standard RAG when you need to answer specific factual questions from a large dataset.

- Use Semantic Routing (Dynamic Prompts) when you need to optimize the logic, format, or behavior of the model.
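The two approaches can also sit side by side behind a single dispatcher. The sketch below assumes an upstream router has already produced an intent label; the intent names, the handler functions, and the choice to fall back to RAG for unknown intents are all hypothetical, and the handlers only return placeholder strings instead of calling a model.

```python
def answer_with_rag(query):
    """Placeholder for a retrieval pipeline over a large dataset."""
    return f"[RAG] retrieve documents, then answer: {query}"


def answer_with_dynamic_prompt(query):
    """Placeholder for loading a specialized reasoning template."""
    return f"[DYNAMIC PROMPT] load reasoning template, then answer: {query}"


# Hypothetical mapping from intent to strategy:
# factual lookups go to RAG, reasoning-heavy tasks go to a dynamic prompt.
STRATEGY_BY_INTENT = {
    "refund_policy_lookup": answer_with_rag,
    "vulnerability_analysis": answer_with_dynamic_prompt,
}


def dispatch(intent, query):
    """Pick a strategy by intent; unknown intents fall back to RAG (assumed)."""
    handler = STRATEGY_BY_INTENT.get(intent, answer_with_rag)
    return handler(query)


if __name__ == "__main__":
    print(dispatch("refund_policy_lookup", "What is the policy on refunds?"))
    print(dispatch("vulnerability_analysis", "Analyze this code for vulnerabilities."))
```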

The future of high-performance LLMs isn’t just about accessing more data—it’s about accessing the right context at the right time. By implementing Semantic Routing, you move your AI from a static, generalist tool to a dynamic, specialized expert.